Azure Stream Analytics is an event-processing engine that allows users to analyze high volumes of data streaming from devices, sensors, and applications. It can be used for Internet of Things (IoT) real-time analytics, remote monitoring, and inventory control. Azure Stream Analytics is also another Azure component in which we can run machine learning: a model published as an API from Azure ML Studio can be consumed inside Azure Stream Analytics to apply machine learning to streaming data coming from sensors, applications, and live databases. In this post and the next one, I will show how to use machine learning inside Azure Stream Analytics. First, I give a gentle introduction to Azure Stream Analytics. Then, I present a simple Azure ML Studio API that is going to be applied to the streaming data.

Azure Stream Analytics Environment

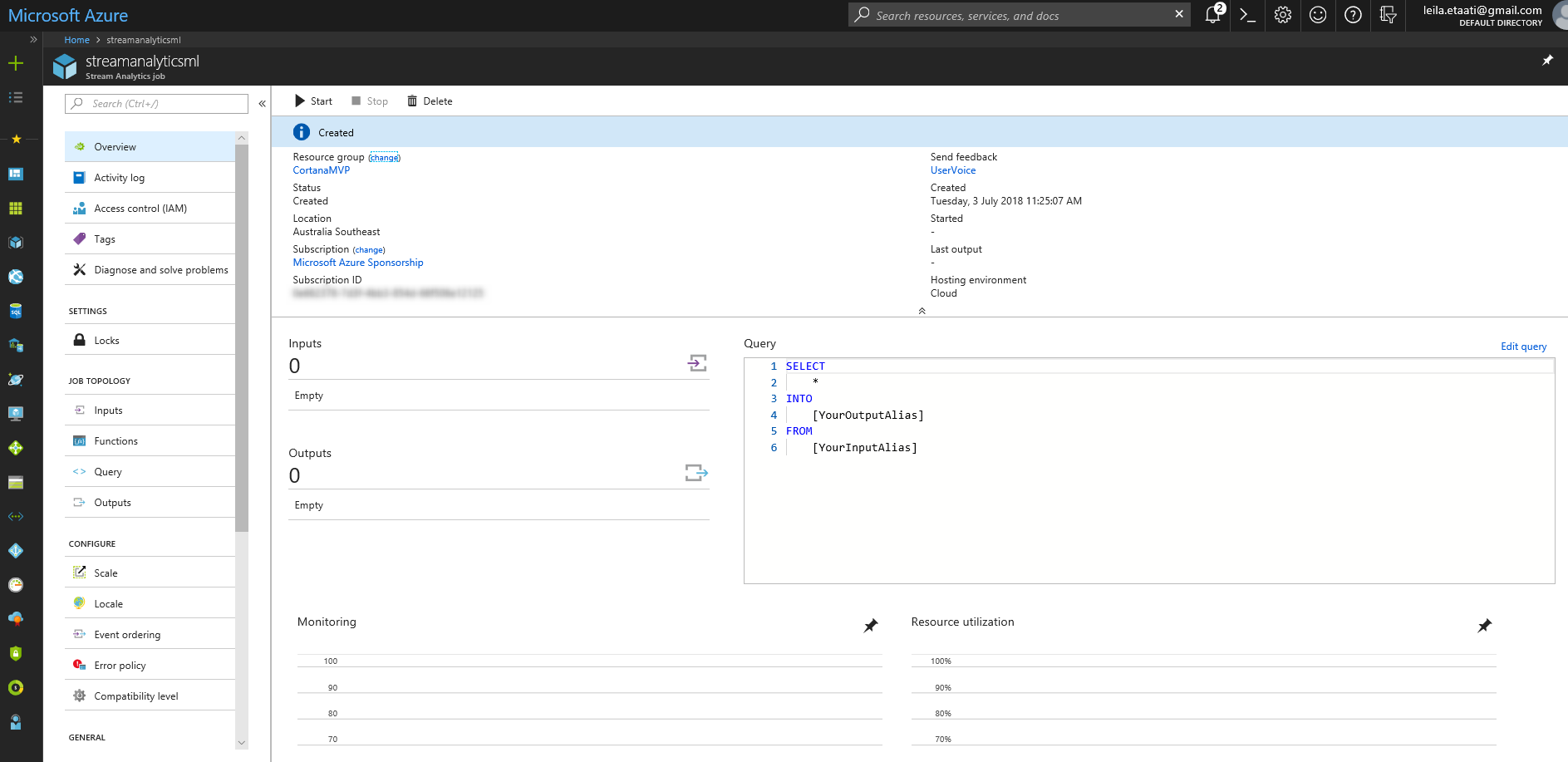

Azure Stream Analytics is an event-processing component in the Azure environment. To use it, log in to your Azure account and create a Stream Analytics job.

When creating the Stream Analytics job in Azure, you need to set some predefined parameters such as the job name, subscription, resource group, and so forth. Stream Analytics works somewhat like a service bus inside Azure: it fetches data from some components, applies analytics to the received data, and passes the results on to other components. As a result, a Stream Analytics job has three main parts: Input, Output, and Query. Stream Analytics can fetch streaming data from three main sources: Event Hubs, IoT Hub, and Blob Storage. These components are mainly used to collect data from external sensors, applications, and live APIs. Stream Analytics can pass data to more Azure components, such as Blob Storage, Azure SQL Database, Power BI, and so forth. Finally, the last important part is the Query Editor, which lets users apply analytics to the received data before sending it to the output.

Stream Analytics queries are written in the Stream Analytics Query Language, which is very similar to SQL.
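To make the Input, Query, and Output parts concrete, here is a minimal Python sketch that mimics what a job does with each event. It is only a local illustration, not how Stream Analytics is implemented. The field names `DeviceId` and `Value`, the threshold of 50, and the input/output names `eventhub-input` and `powerbi-output` in the comment are all hypothetical; the comment shows roughly what the equivalent Stream Analytics Query Language statement could look like.

```python
from typing import Callable, Dict, Iterable, Iterator, List

Event = Dict[str, float]

# Roughly equivalent (hypothetical) Stream Analytics query:
#   SELECT DeviceId, Value
#   INTO [powerbi-output]
#   FROM [eventhub-input]
#   WHERE Value > 50


def query(events: Iterable[Event]) -> Iterator[Event]:
    """Filter and project each event, like the SELECT ... WHERE above."""
    for event in events:
        if event["Value"] > 50:
            yield {"DeviceId": event["DeviceId"], "Value": event["Value"]}


def run_job(source: Iterable[Event],
            transform: Callable[[Iterable[Event]], Iterator[Event]],
            sink: Callable[[Event], None]) -> None:
    """Input -> Query -> Output: read events, transform them, push results to the sink."""
    for row in transform(source):
        sink(row)


if __name__ == "__main__":
    received: List[Event] = []
    run_job([{"DeviceId": 1, "Value": 42.0, "Extra": 0},
             {"DeviceId": 2, "Value": 73.5, "Extra": 1}],
            query, received.append)
    print(received)  # [{'DeviceId': 2, 'Value': 73.5}]
```

Modeling the query as a generator keeps the sketch streaming-friendly: each event is processed as it arrives instead of being collected first.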

Case Study

In this case study, I will show how to get data from an application via Event Hub, pass the collected data into Stream Analytics, apply machine learning, and then show the live results in the Power BI service.

The process is shown in the figure: an application sends live data to Event Hub, Stream Analytics receives the live data, applies an Azure ML API model to it, and then sends the results to Power BI. In the Power BI report, the end user can see the live data alongside the result of the machine learning applied to it.

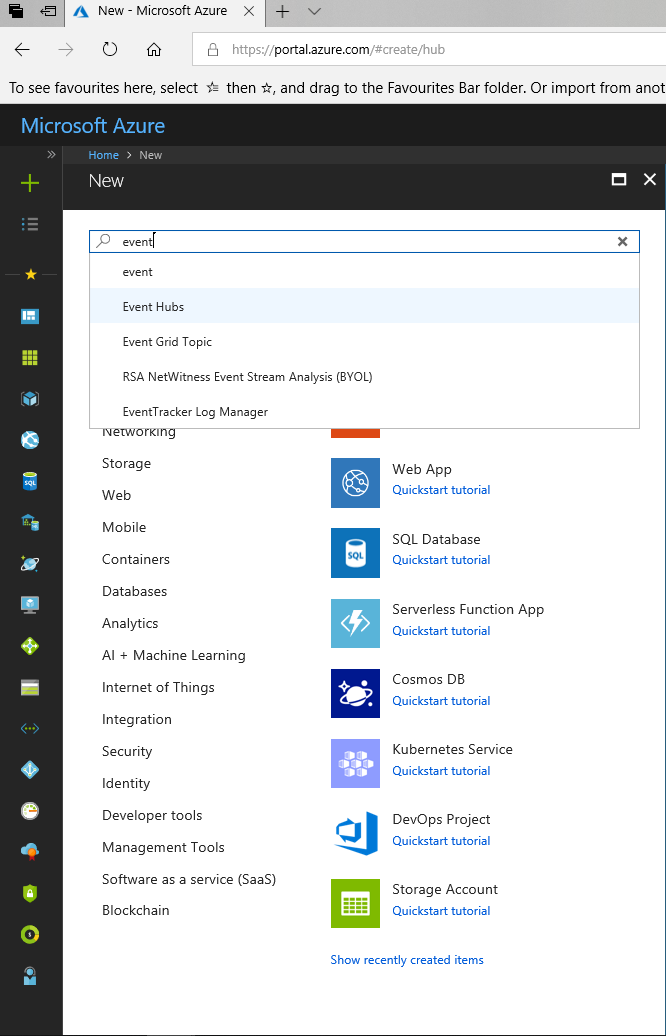

Event Hub

The first step is to create an Event Hubs service in the cloud. To do so, follow the same process as for creating Stream Analytics: create a new service and provide the required information.

Once the Event Hubs service is created, you can add a new event hub to it.

Application

The second step is to create an application that generates some sample data. The application is a sample .NET Framework application that automatically generates random data. It was created by Reza Rad [1]; follow the process in his post to create one.
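The application used in this post is written in .NET [1]. As a rough stand-in, the Python sketch below generates random readings and serializes each one as JSON, a format Stream Analytics can read from Event Hubs. The field names (`DeviceId`, `Value`, `Timestamp`) and the 0 to 100 value range are assumptions, not the schema of Reza Rad's application. Sending uses the `azure-eventhub` package (v5), which is imported lazily so the generator can be tried without it installed.

```python
import json
import random
from datetime import datetime, timezone
from typing import Dict, List


def make_reading(device_id: int, rng: random.Random) -> Dict[str, object]:
    """Build one hypothetical sensor reading with a random Value."""
    return {
        "DeviceId": device_id,
        "Value": round(rng.uniform(0, 100), 2),
        "Timestamp": datetime.now(timezone.utc).isoformat(),
    }


def make_batch(n: int, seed: int = 0) -> List[str]:
    """Generate n readings from devices 1..3 as JSON strings (one event body each)."""
    rng = random.Random(seed)
    return [json.dumps(make_reading(i % 3 + 1, rng)) for i in range(n)]


def send_batch(bodies: List[str], conn_str: str, eventhub_name: str) -> None:
    """Send the JSON bodies to Event Hubs with the azure-eventhub v5 SDK."""
    # Lazy import: requires `pip install azure-eventhub`.
    from azure.eventhub import EventData, EventHubProducerClient

    producer = EventHubProducerClient.from_connection_string(
        conn_str=conn_str, eventhub_name=eventhub_name)
    with producer:
        batch = producer.create_batch()
        for body in bodies:
            batch.add(EventData(body))  # raises ValueError if the batch is full
        producer.send_batch(batch)


if __name__ == "__main__":
    for body in make_batch(3):
        print(body)
```

Passing a seed makes the generated values reproducible, which is handy when comparing what was sent with what appears in Power BI.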

Azure Stream Analytics

The first step is to create a Stream Analytics Job in Azure.

After creating the job, you need to set up its Input, Output, and Query. As mentioned before, Stream Analytics gets data from three main Azure components and passes the streaming data on to other resources such as Power BI, SQL Database, and so forth. The transformation query uses a SQL-like query language to filter, sort, aggregate, and join streaming data over a period of time [1].
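Aggregating "over a period of time" means grouping events into time windows. The Python sketch below mimics a tumbling window (fixed-size, non-overlapping) average per device, roughly what `GROUP BY DeviceId, TumblingWindow(second, 10)` does in the Stream Analytics Query Language. The event shape, timestamps in seconds, and the choice to key windows by their start time are assumptions for illustration; the service's own window boundary semantics may differ.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

# One event: (timestamp in seconds, device id, value), a hypothetical shape.
Event = Tuple[float, int, float]


def tumbling_average(events: Iterable[Event],
                     window_s: float = 10) -> Dict[Tuple[float, int], float]:
    """Average value per (window start, device) over fixed, non-overlapping windows.

    Rough local analogue of:
      SELECT DeviceId, AVG(Value) AS AvgValue
      FROM [eventhub-input]
      GROUP BY DeviceId, TumblingWindow(second, 10)
    """
    sums: Dict[Tuple[float, int], float] = defaultdict(float)
    counts: Dict[Tuple[float, int], int] = defaultdict(int)
    for ts, device, value in events:
        window_start = (ts // window_s) * window_s  # each event lands in exactly one window
        sums[(window_start, device)] += value
        counts[(window_start, device)] += 1
    return {key: sums[key] / counts[key] for key in sums}


if __name__ == "__main__":
    events = [(1.0, 1, 10.0), (4.0, 1, 20.0), (12.0, 1, 30.0), (5.0, 2, 7.0)]
    print(tumbling_average(events))
    # {(0.0, 1): 15.0, (10.0, 1): 30.0, (0.0, 2): 7.0}
```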

In this case study, we are going to get data from our Event Hub, apply machine learning to it, and then pass it to Power BI to see the live data with the applied analytics.

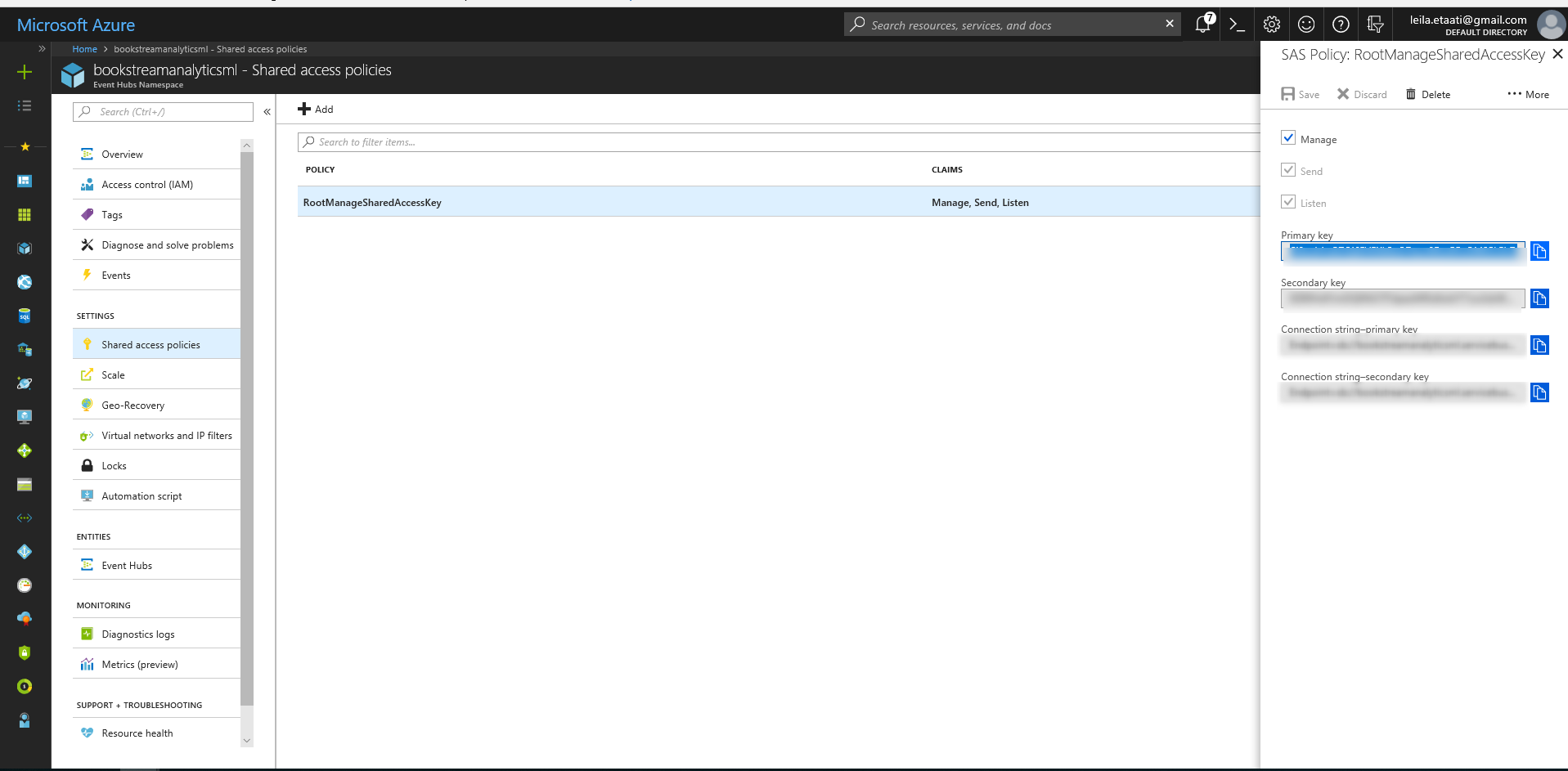

To create an input, click the Inputs option on the main page of the Stream Analytics job. After creating the input, create an output following the same process. Before writing the query, we need to set up some keys and names in the .NET code. To get the key, open the Event Hubs namespace, click Shared access policies, click RootManageSharedAccessKey, and then copy the Primary Key.
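The copied key typically ends up inside a connection string in the sender's code. The Python sketch below assembles an Event Hubs connection string in the standard `Endpoint=sb://<namespace>.servicebus.windows.net/;SharedAccessKeyName=...;SharedAccessKey=...;EntityPath=...` format and parses it back. The namespace, event hub name, and key values are placeholders; the same Shared access policies page also offers a ready-made primary connection string that can be copied instead.

```python
from typing import Dict, Optional


def build_connection_string(namespace: str, key_name: str, key: str,
                            eventhub: Optional[str] = None) -> str:
    """Assemble an Event Hubs connection string from its parts."""
    parts = [
        f"Endpoint=sb://{namespace}.servicebus.windows.net/",
        f"SharedAccessKeyName={key_name}",
        f"SharedAccessKey={key}",
    ]
    if eventhub:
        parts.append(f"EntityPath={eventhub}")  # optional: scopes the string to one event hub
    return ";".join(parts)


def parse_connection_string(conn_str: str) -> Dict[str, str]:
    """Split a connection string back into its name/value parts."""
    result: Dict[str, str] = {}
    for part in conn_str.split(";"):
        if part:
            # Split on the first '=' only: base64 keys often end with '='.
            name, _, value = part.partition("=")
            result[name] = value
    return result


if __name__ == "__main__":
    cs = build_connection_string("my-namespace", "RootManageSharedAccessKey",
                                 "<primary-key>", "my-eventhub")
    print(cs)
```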

Azure ML

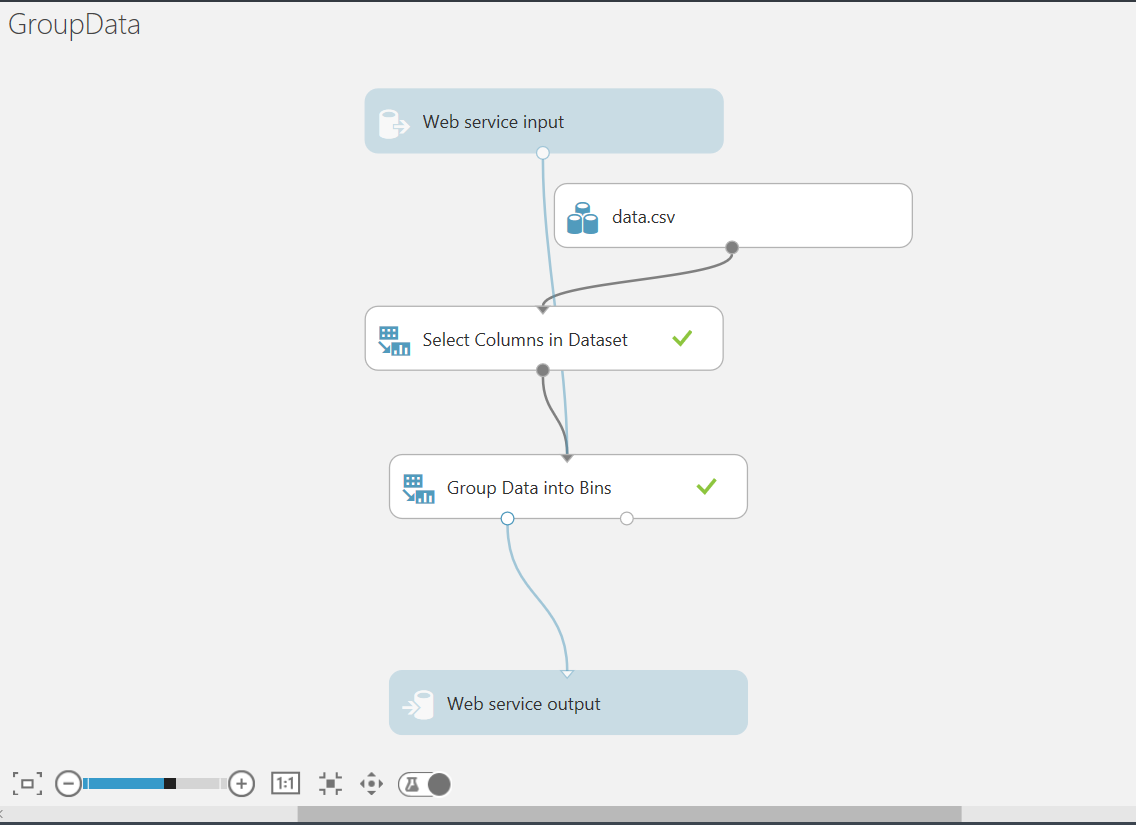

In Azure ML Studio, I created a simple model for grouping data. The aim of this model is to take the data from the application and assign it to four groups. The model is then exposed as an API, so that Stream Analytics can pass data to it and get back the group number along with the original value. To build it, import the sample data that the application is going to generate. Then drag and drop the Select Columns in Dataset module to select the Value column. Finally, add another module named Group Data into Bins.
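Group Data into Bins assigns each value to a bin. As a rough local analogue, the Python sketch below learns three cut points from training values with `statistics.quantiles` and then maps any new value to one of four groups. The post does not say which binning mode the model used, so quantile binning here is an assumption, and the module's exact edge handling may differ. The response fields `Value` and `Group` are hypothetical.

```python
import bisect
import statistics
from typing import Dict, List, Sequence


def quantile_edges(values: Sequence[float], n_bins: int = 4) -> List[float]:
    """Cut points splitting the training values into n_bins roughly equal-count groups."""
    return statistics.quantiles(values, n=n_bins)  # returns n_bins - 1 cut points


def assign_bin(value: float, edges: Sequence[float]) -> int:
    """1-based group number for a value, given sorted cut points."""
    return bisect.bisect_right(edges, value) + 1


def score(value: float, edges: Sequence[float]) -> Dict[str, float]:
    """Mimic the API response: the original value plus its group number."""
    return {"Value": value, "Group": assign_bin(value, edges)}


if __name__ == "__main__":
    edges = quantile_edges([1, 2, 3, 4, 5, 6, 7, 8])
    print(edges)  # [2.25, 4.5, 6.75]
    print([(v, assign_bin(v, edges)) for v in (0.5, 3.0, 5.0, 9.0)])
    # [(0.5, 1), (3.0, 2), (5.0, 3), (9.0, 4)]
```

Fitting the edges once and reusing them for every incoming value matches how a deployed model behaves: the bins are fixed at training time, not recomputed per event.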

Follow the steps below to generate an API for this model.

1. Run the model using the Run option at the bottom of the page.
2. Click Set Up Web Service to create the input and output for the API.
3. Run the model again and click Deploy Web Service.

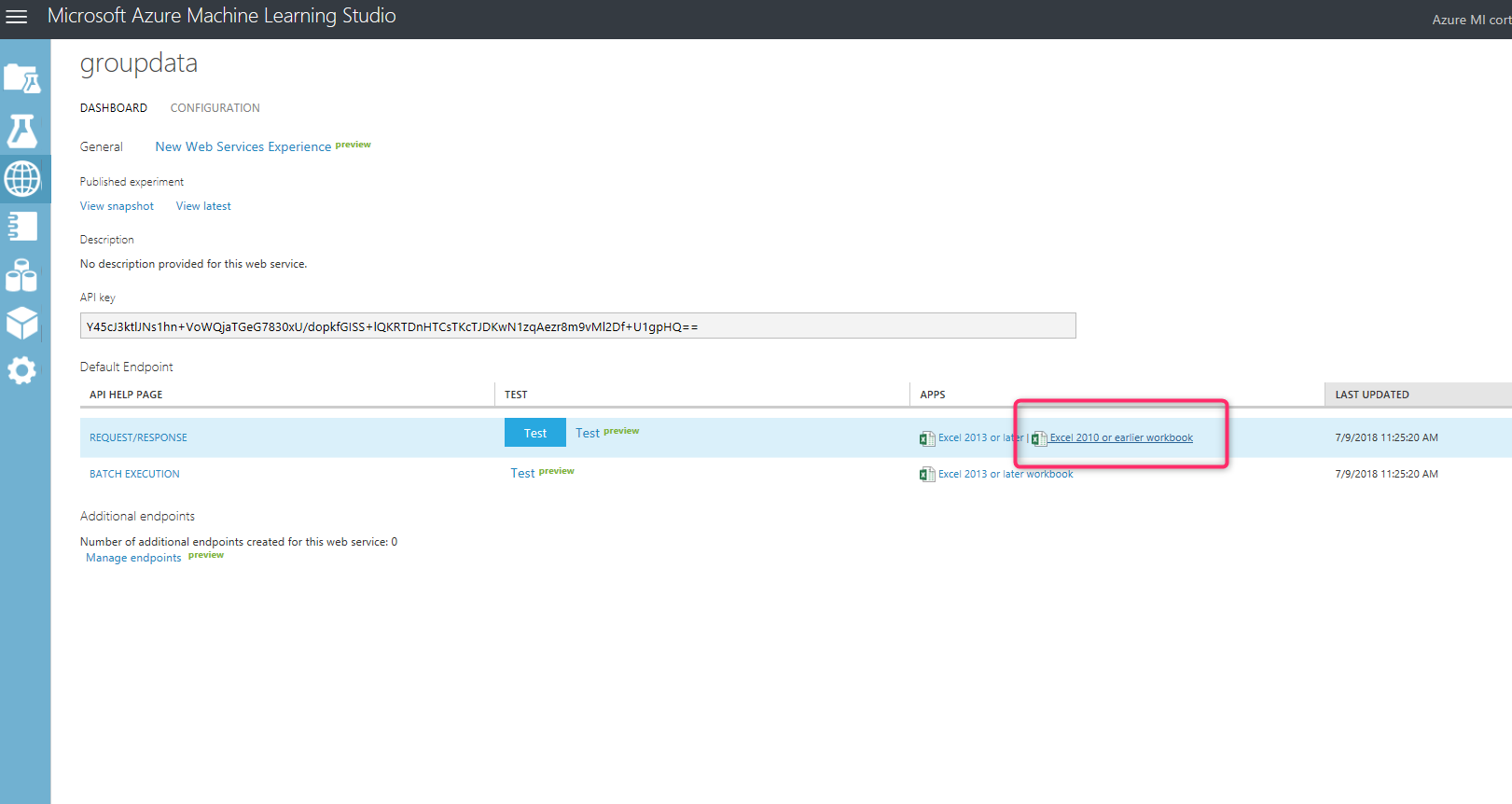

After the web service is created, a new page is shown, with the API key for the web service displayed in the middle of the page.

To connect Stream Analytics to the Azure ML API, click APPS (at the bottom of the page), then click Excel 2010 or earlier workbook.

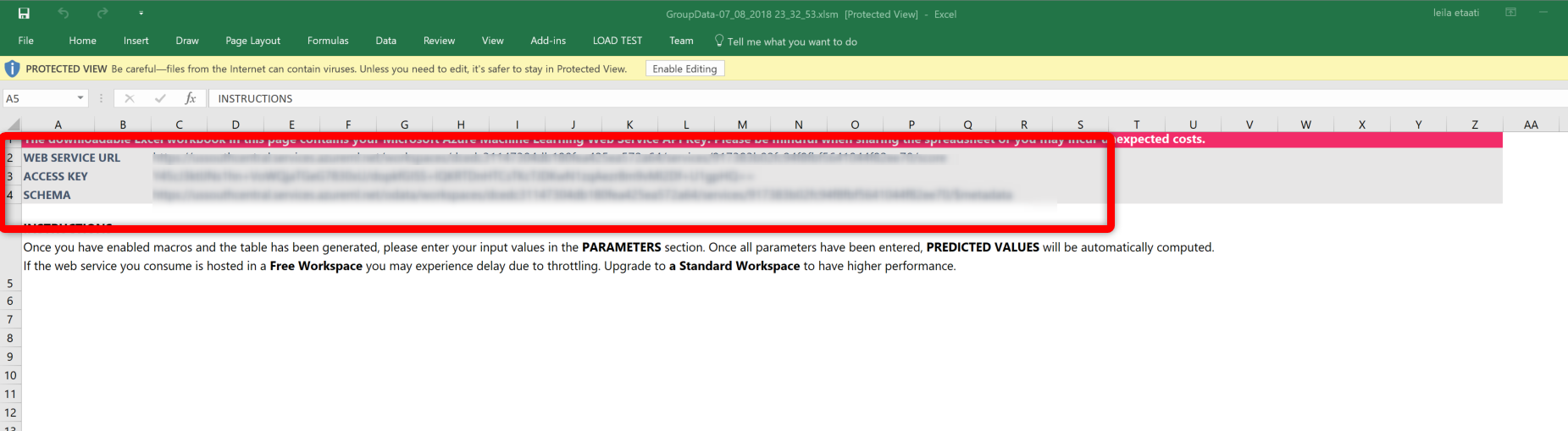

A new Excel workbook will be downloaded. Open the Excel file; at the top of the sheet, you will see the Webservice URL and Access Key.

Copy the Webservice URL and the Access Key.
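Before wiring the URL and key into Stream Analytics, it can help to call the web service directly. The Python sketch below builds a request in the request-response format used by classic Azure ML Studio web services (an `Inputs` object keyed by input name, containing `ColumnNames` and `Values`, plus `GlobalParameters`) and authenticates with a bearer-token `Authorization` header. The input name `input1`, the single `Value` column, and the URL and key are assumptions; check the API help page of your own web service for its exact request schema.

```python
import json
import urllib.request
from typing import List


def build_request(url: str, api_key: str, values: List[float]) -> urllib.request.Request:
    """Build a request-response call for a classic Azure ML Studio web service."""
    body = {
        "Inputs": {
            "input1": {  # hypothetical input name; see your service's API help page
                "ColumnNames": ["Value"],
                "Values": [[v] for v in values],  # one row per value
            }
        },
        "GlobalParameters": {},
    }
    return urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json",
                 "Authorization": "Bearer " + api_key},
        method="POST",
    )


def call_service(url: str, api_key: str, values: List[float]) -> dict:
    """Send the request and return the parsed JSON response (needs network access)."""
    with urllib.request.urlopen(build_request(url, api_key, values)) as resp:
        return json.loads(resp.read().decode("utf-8"))
```

For example, `call_service(WEBSERVICE_URL, ACCESS_KEY, [42.0])`, with the two values copied from the workbook, should return the service's JSON output for one row; separating `build_request` from the network call makes the payload easy to inspect before sending.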

Now that we have the key and URL, we need to set up a function in Stream Analytics to access the model created in Azure ML Studio.

In the next post, I will explain how to create a Stream Analytics function and how to apply the Azure ML API to streamed data.

[1] http://radacad.com/stream-analytics-and-power-bi-join-forces-to-real-time-dashboard