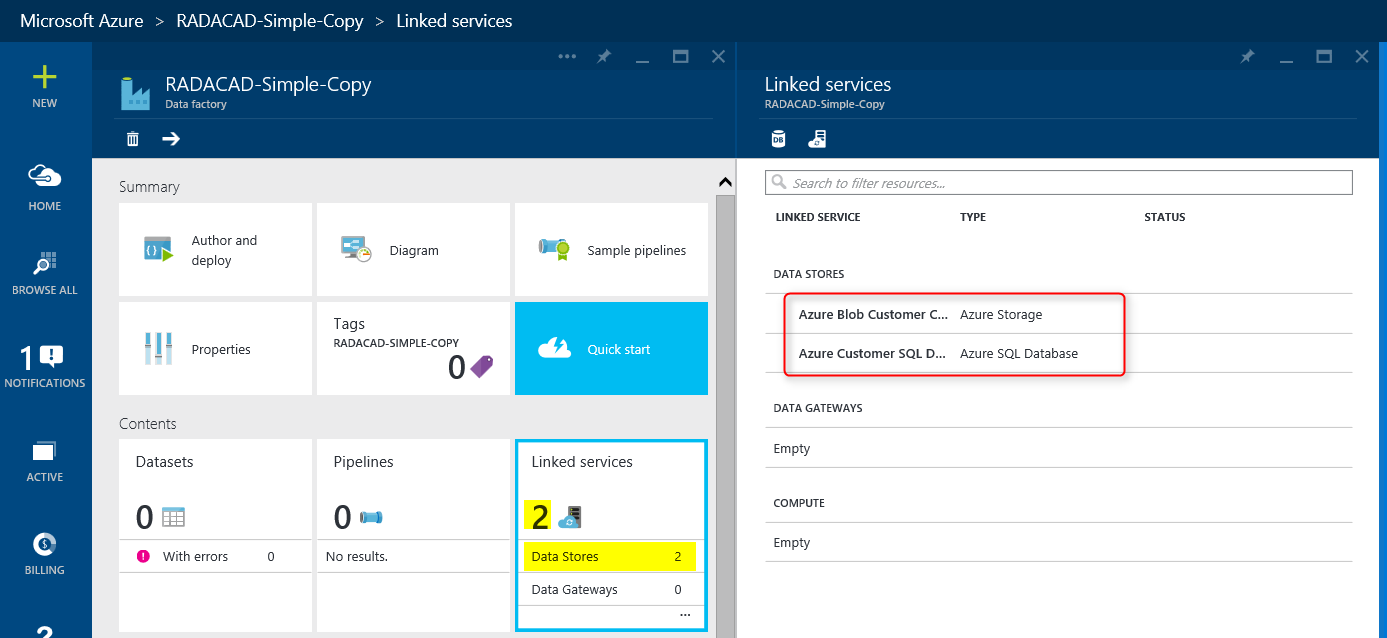

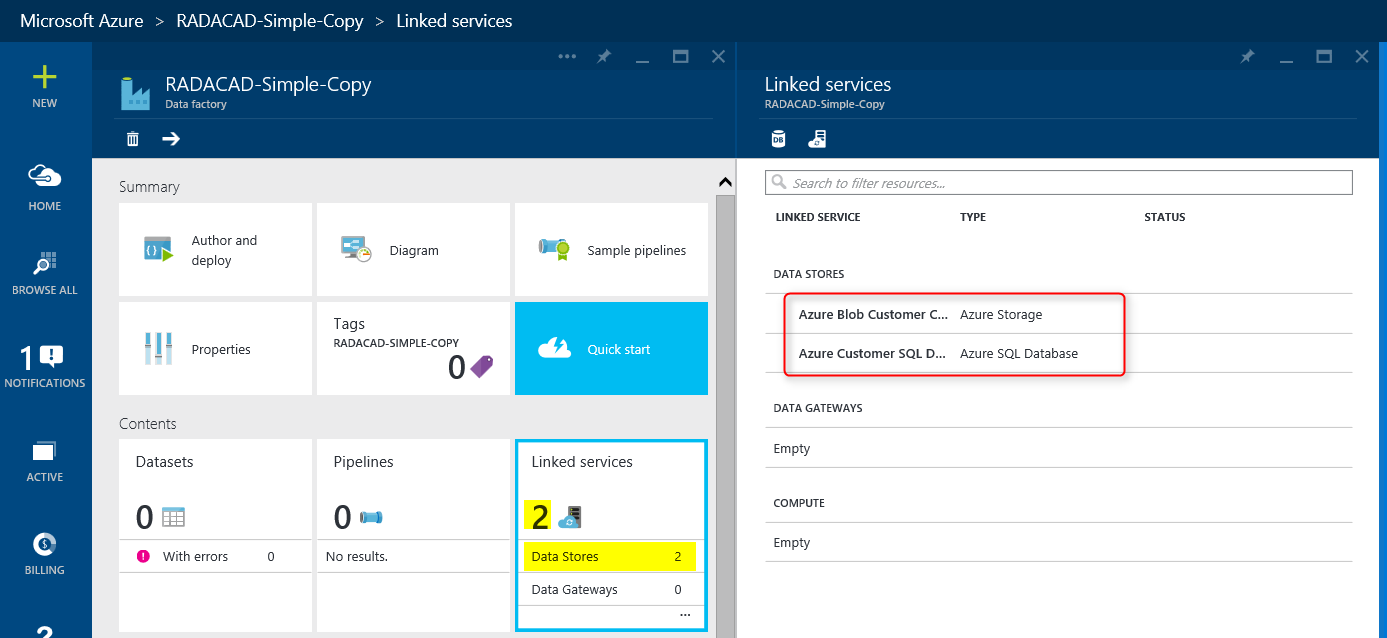

In a previous post I explained what Azure Data Factory is, along with the tools and requirements for this service. In this post I want to go through a simple demo of Data Factory, so you get an idea of how a Data Factory project is built, developed, and scheduled to run. You may see some components of Azure Data Factory in this post that you don’t fully understand, but don’t worry, I’ll go through them in future posts.

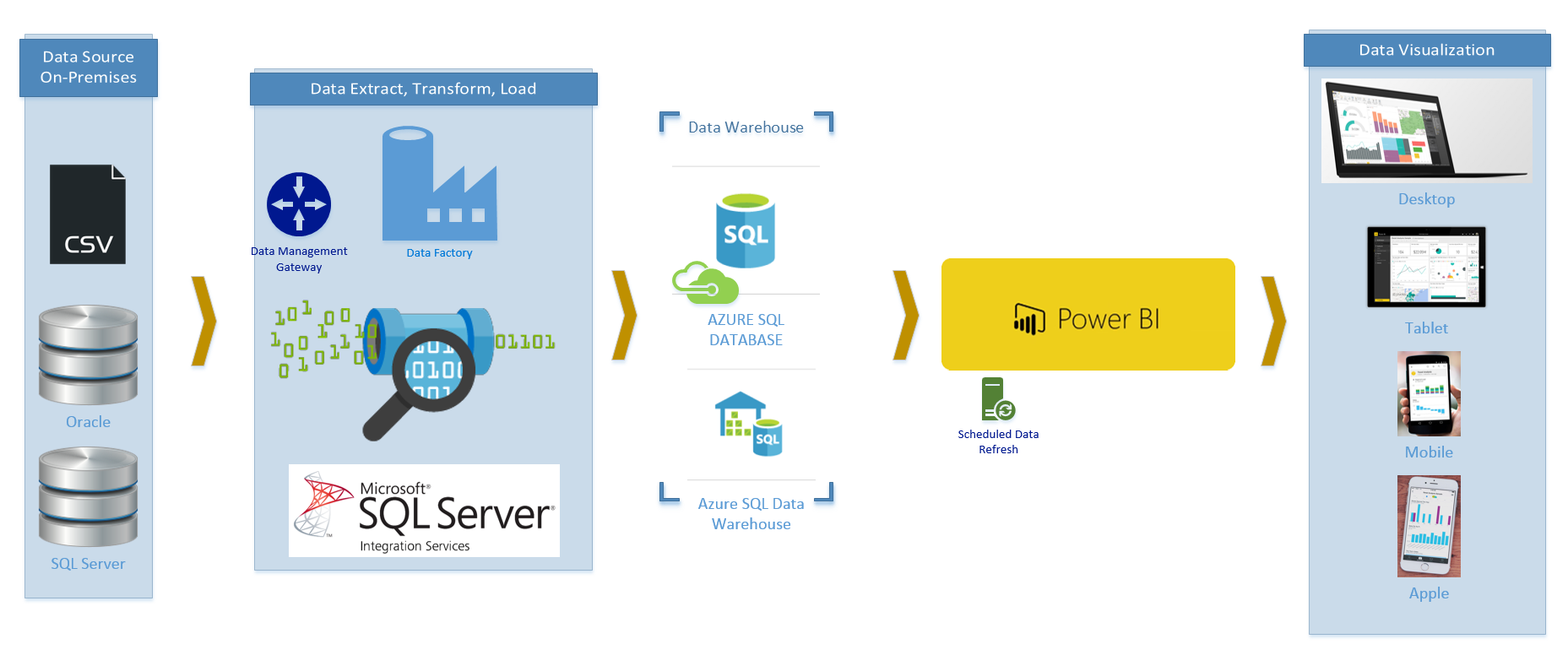

To recap the previous post: Azure Data Factory is a Microsoft Azure service that ingests data from data sources, applies compute operations to that data, and loads it into a destination. The main purpose of Data Factory is data ingestion, and that is the big difference between this service and ETL tools such as SSIS (I’ll go through the differences between Data Factory and SSIS in a separate blog post). With Azure Data Factory you can:

- Access data sources such as on-premises SQL Server, SQL Azure, and Azure Blob storage

- Apply data transformations through Hive, Pig, and C#

- Monitor the data pipeline, validation, and the execution of scheduled jobs

- Load the data into desired destinations such as on-premises SQL Server, SQL Azure, and Azure Blob storage

- And last but not least, it is a cloud-based service
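
To make the ingest, transform, load, and monitor flow in the list above a bit more concrete, here is a minimal conceptual sketch in Python. It does not use the Data Factory API or any Azure SDK: the `Pipeline` class, its fields, and the sample rows are all hypothetical names invented for illustration, and plain in-memory lists stand in for real stores such as SQL Server or Azure Blob storage.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Iterable, List

# A row is just a dictionary here; this is an assumption for the sketch.
Row = Dict[str, object]


@dataclass
class Pipeline:
    """Hypothetical stand-in for a data pipeline: source -> transform -> sink."""
    source: Callable[[], Iterable[Row]]      # where the data comes from
    transform: Callable[[Row], Row]          # compute step applied to each row
    sink: Callable[[List[Row]], None]        # where the data is loaded
    log: List[str] = field(default_factory=list)  # very simple "monitoring"

    def run(self) -> int:
        rows = list(self.source())
        self.log.append(f"ingested {len(rows)} rows")

        transformed = [self.transform(row) for row in rows]
        self.log.append(f"transformed {len(transformed)} rows")

        self.sink(transformed)
        self.log.append(f"loaded {len(transformed)} rows")
        return len(transformed)


# In-memory list standing in for the destination store.
destination: List[Row] = []

pipeline = Pipeline(
    source=lambda: [
        {"name": "alice", "amount": "10"},
        {"name": "bob", "amount": "5"},
    ],
    transform=lambda row: {
        "name": str(row["name"]).title(),
        "amount": int(str(row["amount"])),
    },
    sink=destination.extend,
)

loaded = pipeline.run()
for entry in pipeline.log:
    print(entry)
```

In the real service the source, the transformation, and the destination are separate components that you configure rather than Python callables, and scheduling and monitoring are handled by the service itself; the sketch only shows how the pieces relate to each other.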

[…]