Azure Data Catalog: What to Expect This Monday?

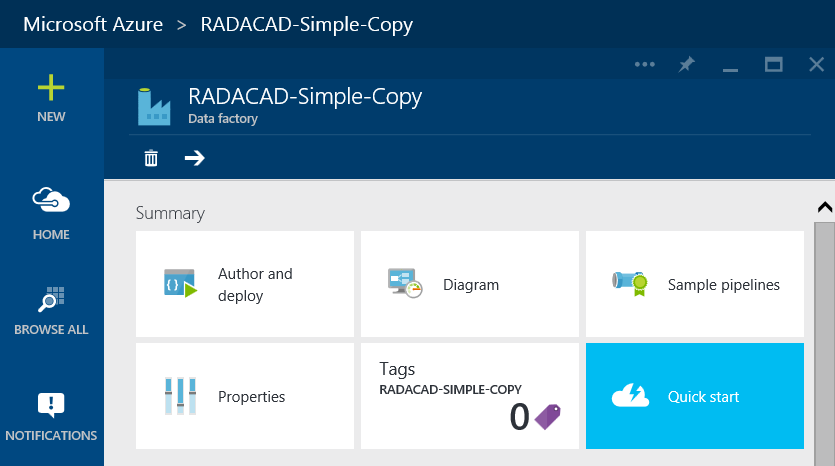

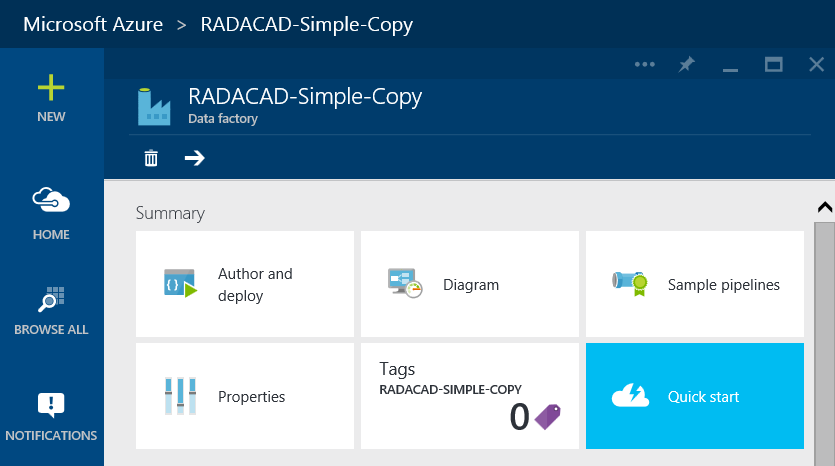

Thrilling news about the public preview of Azure Data Catalog spread yesterday through Joseph Sirosh’s blog post: Azure Data Catalog will be available for public preview this Monday, 13th of July. This is a great step forward for using metadata alongside tools for extracting and visualizing data. I would like to share my thoughts about what a data catalog is and what to expect from it on Monday.

What is a Data Catalog? A data catalog contains metadata related to a data source. That metadata can contain tags, comments, descriptions, annotations, and so on about the data source and its tables, views, indexes, and all other objects. Most of you have worked with databases (readers of my blog are usually database pros 😉 ). In every database environment there are two sides: business and IT (or let’s say the owners, producers, and consumers of the database technology). Business usually understands the concepts, while IT understands the database structure. Metadata stored in a data catalog is the connection between these two.
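To make the idea concrete, here is a minimal sketch of a catalog as a plain in-memory structure. It is not the Azure Data Catalog API; the names `CatalogEntry`, `DataCatalog`, `register`, `annotate`, and `search`, as well as the sample table name, are all made up for illustration. It only shows how business-side metadata (tags, comments, descriptions) can sit next to the technical object names that IT knows.

```python
from dataclasses import dataclass, field


@dataclass
class CatalogEntry:
    """Metadata about one object in a data source (hypothetical shape)."""
    object_name: str                  # technical name, e.g. a table or view
    object_type: str                  # "table", "view", "index", ...
    description: str = ""             # business-friendly description
    tags: set = field(default_factory=set)
    comments: list = field(default_factory=list)


class DataCatalog:
    """Toy in-memory catalog; an assumption, not a real product API."""

    def __init__(self):
        self._entries = {}

    def register(self, entry):
        # Store the entry under its technical name.
        self._entries[entry.object_name] = entry

    def annotate(self, object_name, tags=(), comment=None, description=None):
        # Attach business metadata to an already registered object.
        entry = self._entries[object_name]
        entry.tags.update(tags)
        if comment is not None:
            entry.comments.append(comment)
        if description is not None:
            entry.description = description

    def search(self, term):
        # Case-insensitive match against description, tags, and comments;
        # returns the technical names of matching objects, sorted.
        needle = term.lower()
        hits = []
        for entry in self._entries.values():
            haystack = [entry.description, *entry.tags, *entry.comments]
            if any(needle in text.lower() for text in haystack):
                hits.append(entry.object_name)
        return sorted(hits)


catalog = DataCatalog()
catalog.register(CatalogEntry("dbo.FactSales", "table"))
catalog.annotate("dbo.FactSales", tags={"revenue"},
                 description="Daily sales figures per store")
print(catalog.search("Revenue"))
```

A business user searches with a concept such as "revenue" and gets back the technical object name, which is the bridging role described above.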