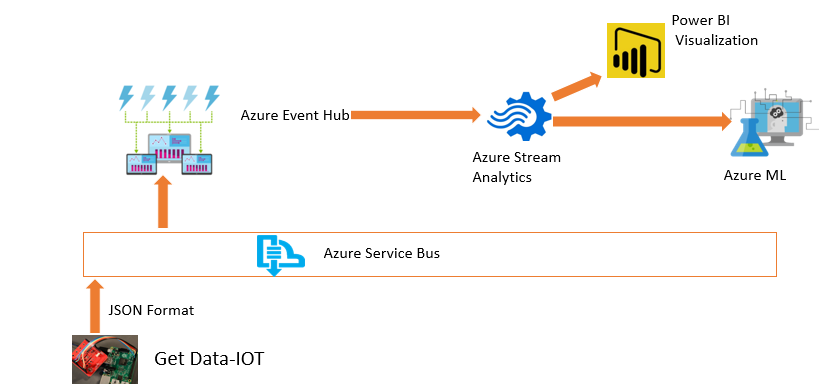

In the previous posts (part 1 and part 2), I explained the process of live data streaming. The first post covered setting up the Raspberry Pi 3 and the weather station. The second covered setting up the Event Hub. In this post, I will explain how to create and set up Stream Analytics.

What is Stream Analytics?

Stream Analytics is an event processing engine that helps send data from Event Hub to Power BI and Azure ML.

In this post I will explain how to use Stream Analytics to see live data in Power BI.

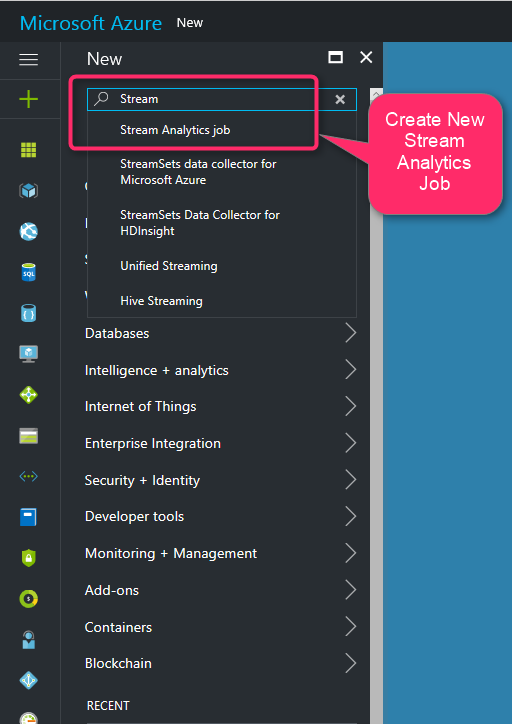

To create a Stream Analytics job, I logged in to the new Azure Portal. In the search area I typed “Stream”, then clicked on “Stream Analytics Job”.

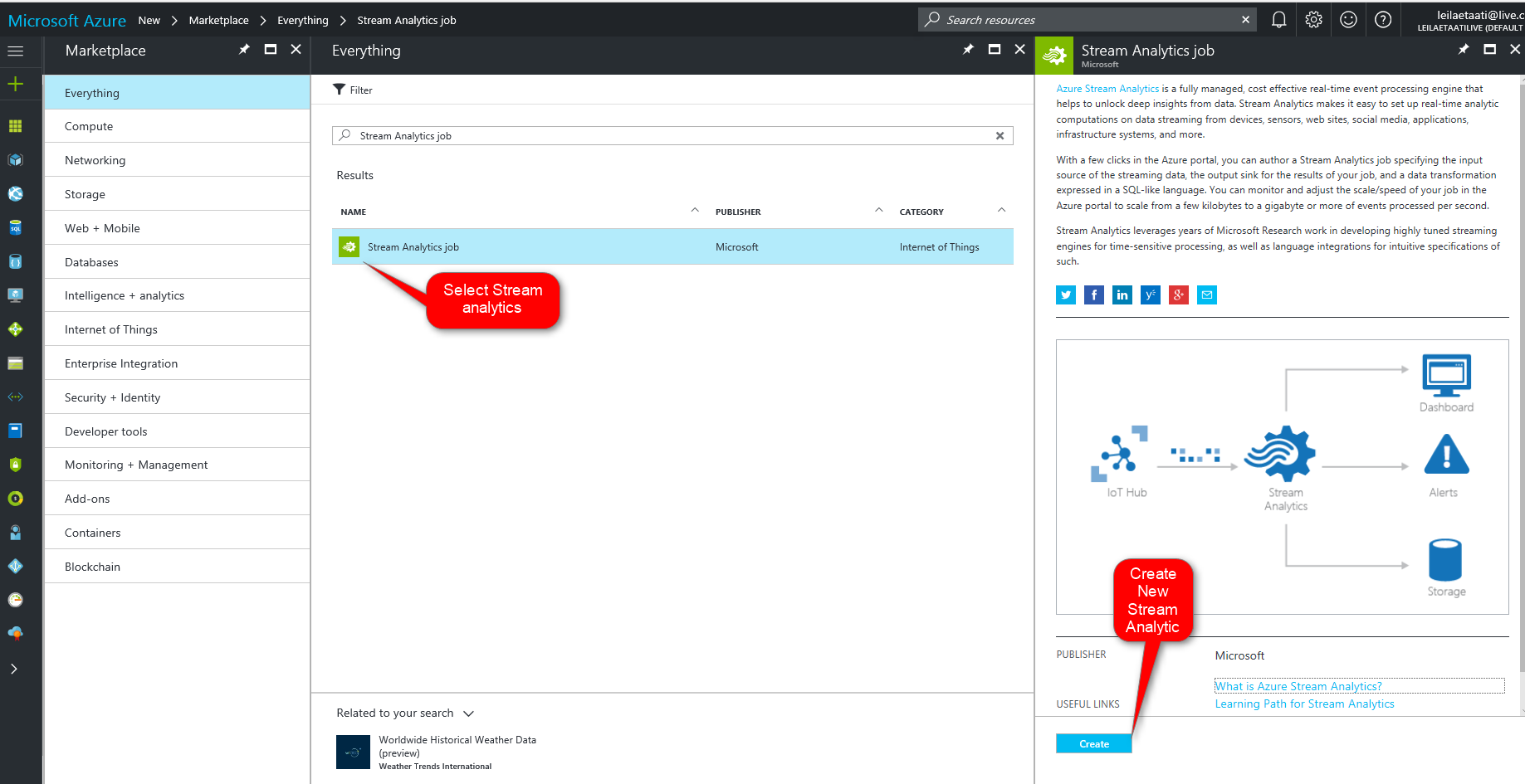

Then, in the “Name” area, I clicked on “Stream Analytics Job”. Clicking on this icon opens a new page. On this page, click “Create” to start creating a new Stream Analytics job.

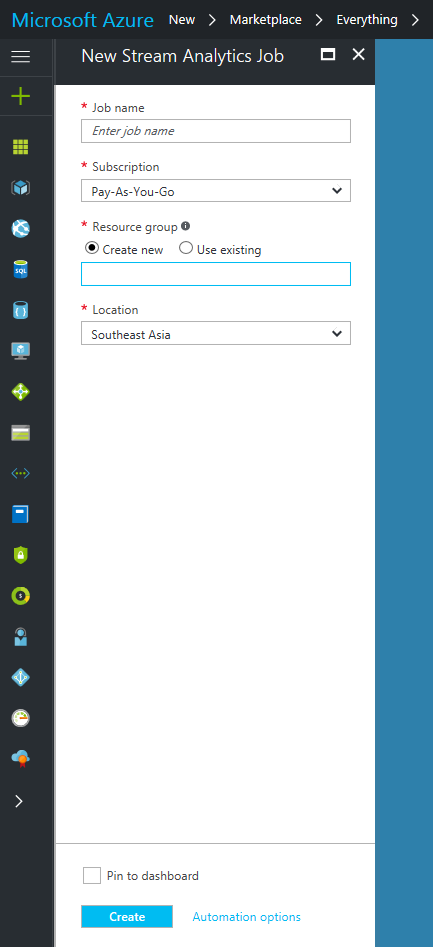

Clicking “Create” opens a new page where we have to provide some information, such as the “Job Name” and the “Subscription” (already selected). We also need to specify a new resource group. Finally, we choose the location where the Stream Analytics job will be set up.

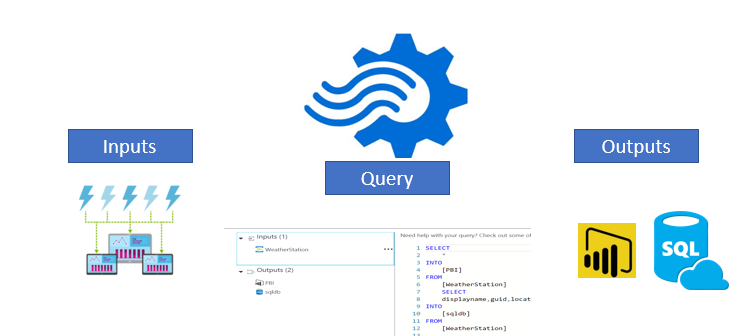

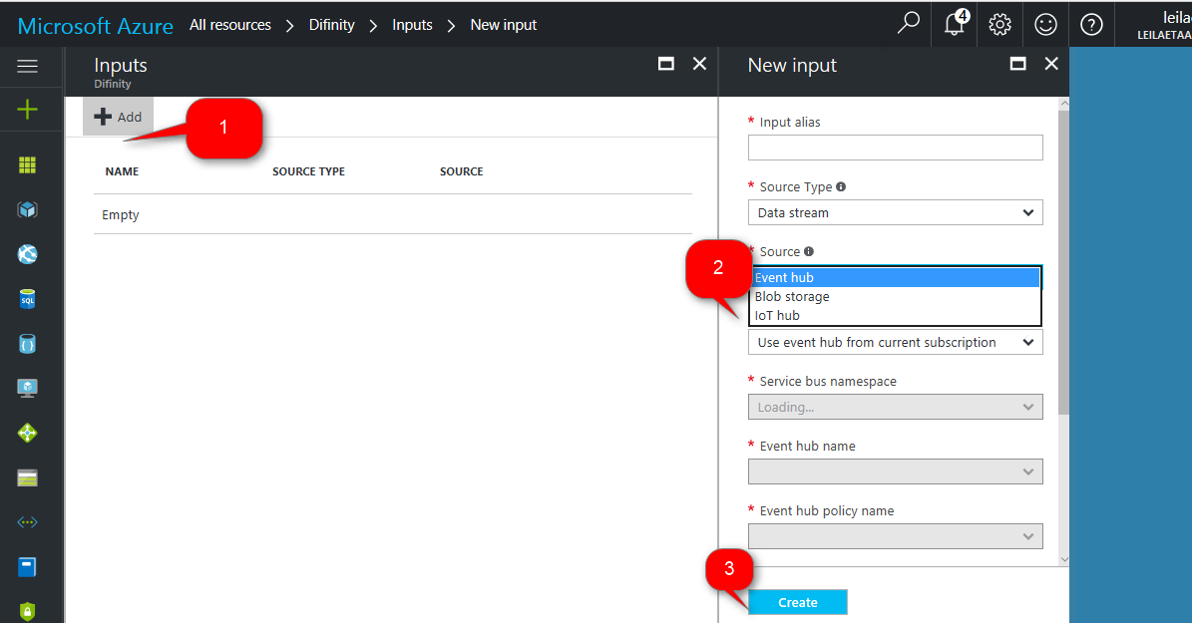

Stream Analytics accepts input from Event Hub, Blob Storage, and IoT Hub.

Then we write a query to send the data from the inputs to the outputs. Stream Analytics can send data to Power BI, Azure SQL Server, Azure Data Lake Store, Blob Storage, Table Storage, and DocumentDB.

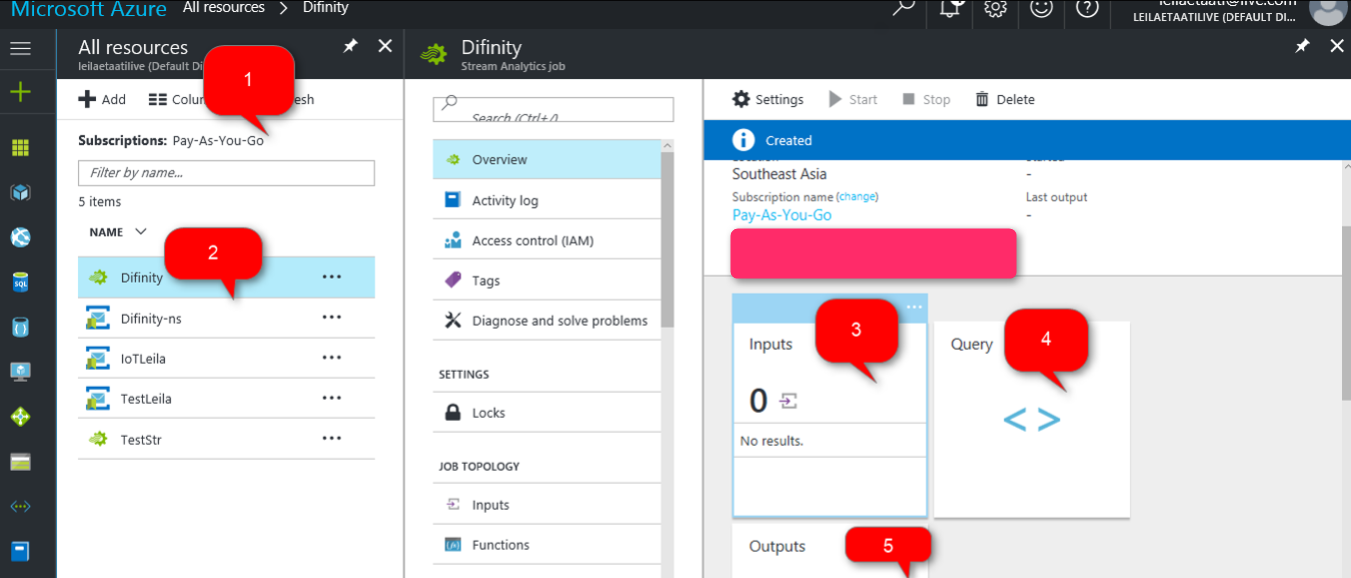

The query is written in the “Stream Analytics Query Language”, which is very similar to SQL. After creating a new Stream Analytics job, we specify its input, output, and query by clicking on each of them.

After clicking on the “Input” icon, we need to specify the input source. In our scenario, “Event Hub” is the main input for getting data. Hence, we specify the service and create the input.

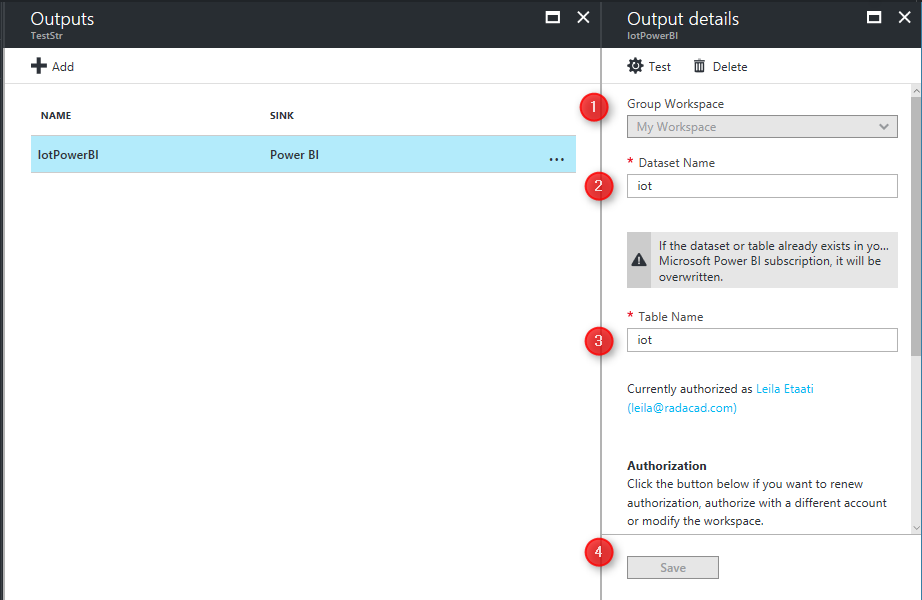

After creating the input, we also have to select the output, which in this scenario is Power BI. For the output, we just need to specify the group workspace, dataset name, and table name. Stream Analytics will automatically create a table in Power BI with the name we provide here (see the image below). However, the data attributes are defined in the Stream Analytics query.

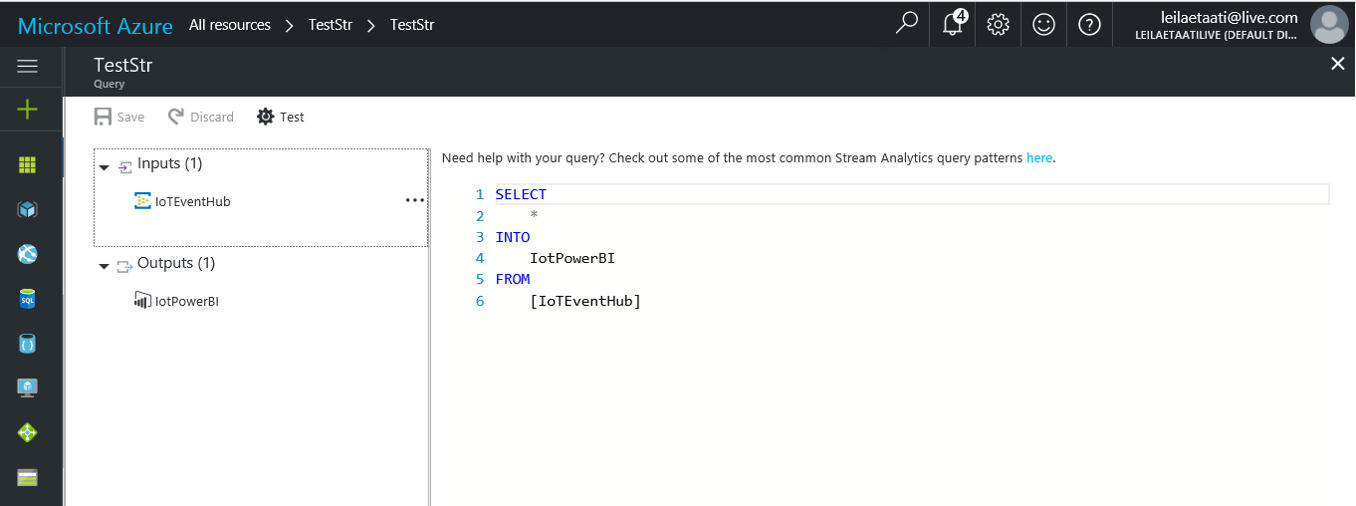

The Stream Analytics query is very similar to a SQL statement (see the image below).

In this scenario, we want to get all the data from the Event Hub. Hence, we use “SELECT *”, and in the FROM clause we choose “IoTEventHub”, which holds all the data collected from the weather station. Then we insert the data into “IoTPowerBI”.

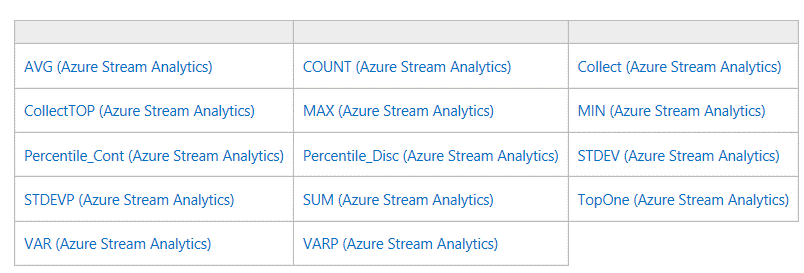
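Written out as text, the pass-through query described above would look roughly like the sketch below. It assumes the input and output were given the aliases “IoTEventHub” and “IoTPowerBI” when they were created; if you used other names, substitute them.

```sql
-- Pass-through query: forward every event from the input to the output.
-- IoTEventHub is the Event Hub input alias, IoTPowerBI the Power BI output alias.
SELECT
    *
INTO
    IoTPowerBI
FROM
    IoTEventHub
```

Because the columns of the Power BI table come from the query, replacing `*` with an explicit list of fields (whatever field names your device actually sends) is one way to control which attributes show up in the dataset.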

In a Stream Analytics query, we can also use built-in functions. The image below shows the available functions.

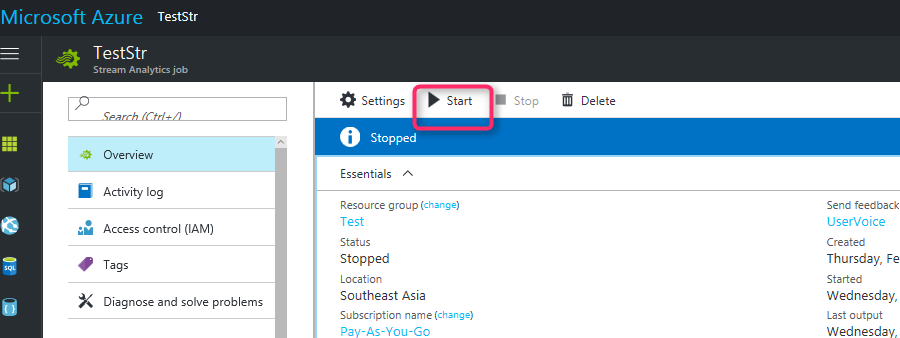
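As a rough illustration of how built-in functions fit into a query, the sketch below averages a reading over 30-second windows instead of forwarding every event. The field name `temperature` is an assumption made for the example and is not taken from the weather station payload; the aliases are the same “IoTEventHub” and “IoTPowerBI” used in this post.

```sql
-- Average reading per 30-second tumbling window.
-- "temperature" is a hypothetical field name; use a field your device sends.
SELECT
    System.Timestamp() AS WindowEnd,      -- end time of each window
    AVG(temperature) AS AvgTemperature    -- built-in aggregate function
INTO
    IoTPowerBI
FROM
    IoTEventHub
GROUP BY
    TumblingWindow(second, 30)            -- built-in windowing function
```

A query like this sends one row per window to Power BI, which can be easier to chart than the raw event stream.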

After creating the Stream Analytics job, we need to run the program (the .NET program we already have from part 1) and then start the Stream Analytics job. To fetch data from the Event Hub, the Stream Analytics job has to be started (see the image below).

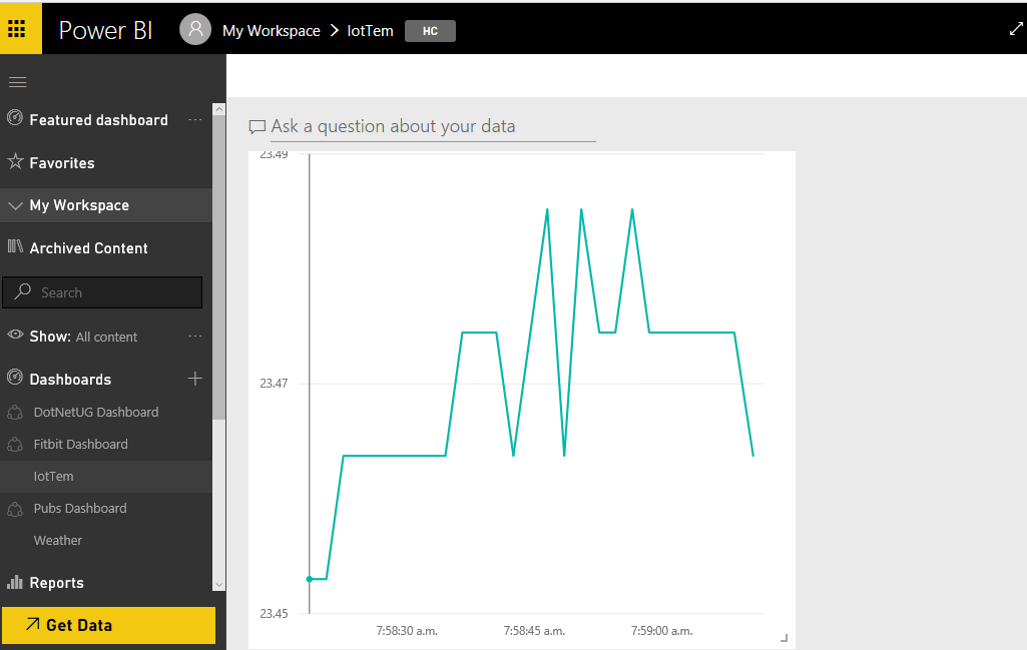

The data will be sent to Power BI. Once the Stream Analytics job is running, we will see the diagram below in Power BI.

First, we have to run the .NET code to get data from the weather sensor. The data is sent from the .NET program to the Event Hub; the video below shows the process. After that, the data is sent to Stream Analytics, which sends it on to Power BI. The video below shows the process.

The result is live data in Power BI.

It is also possible to send the collected data to Azure ML for further analytics. In the next post, I will explain how to create predictive analytics in Azure ML to identify anomalies in the data collected from the weather sensor.

[1] https://docs.microsoft.com/en-us/azure/stream-analytics/stream-analytics-introduction

[2] https://www.hackster.io/windowsiot/build-hands-on-lab-iot-weather-station-using-windows-10-5b818f