Azure Data Lake Store is one of the components of the Microsoft cloud. It helps developers, data scientists, and analysts store data of any size, shape, and speed. Azure Data Lake is optimized for processing large amounts of data and provides parallel processing with high performance. In Azure Data Lake, we can create a hierarchical folder structure for our data. Because of these capabilities, Azure Data Lake makes it easy for data scientists to apply advanced analytics and machine learning models with high scalability, cost-effectively. Azure Data Lake Analytics includes U-SQL, a SQL-like language that enables you to process unstructured data [1]. It is possible to do machine learning inside Azure Data Lake, and to explore Azure Data Lake from RStudio to create models inside the RStudio environment. Moreover, you can get data from Azure Data Lake using a Hive query and use that data inside Azure Machine Learning. In this chapter, you will see how to write and work with data using the U-SQL language with R in Azure Data Lake, and how to import data from Azure Data Lake into RStudio or export data from RStudio into Azure Data Lake.
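A typical U-SQL script has three stages: EXTRACT rows from a file, transform them with SELECT, and OUTPUT the result to another file. The sketch below only mimics that shape in plain Python so the flow is easy to follow; it is not U-SQL, and the input data and function names are hypothetical.

```python
import csv
import io

# Hypothetical tab-separated input standing in for a file in the Data Lake Store.
RAW_TSV = "name\tcity\tamount\nAna\tPerth\t12.5\nBen\tSydney\t7.0\nCai\tPerth\t3.5\n"


def extract(text):
    """EXTRACT-like step: parse TSV text into a list of row dictionaries (a 'rowset')."""
    return list(csv.DictReader(io.StringIO(text), delimiter="\t"))


def select_totals_by_city(rows):
    """SELECT ... GROUP BY-like step: sum the 'amount' column per city."""
    totals = {}
    for row in rows:
        totals[row["city"]] = totals.get(row["city"], 0.0) + float(row["amount"])
    return totals


def output_csv(totals):
    """OUTPUT-like step: write the aggregated rowset as CSV text, sorted by city."""
    buf = io.StringIO()
    writer = csv.writer(buf, lineterminator="\n")
    writer.writerow(["city", "total"])
    for city in sorted(totals):
        writer.writerow([city, totals[city]])
    return buf.getvalue()


result = output_csv(select_totals_by_city(extract(RAW_TSV)))
print(result)
```

In real U-SQL the same three stages are declarative statements over files in the store, and the service decides how to parallelize them.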

Azure Data Lake Environment

Azure Data Lake is one of the Microsoft Cloud components designed for storing data. As you can see in the figure below, the second component in the Azure Portal is about storing data: Azure Data Lake Store is a storage service that can store data of any size and format (structured and unstructured). The third component is about analytics: Azure Data Lake Analytics can be used for data analytics (using U-SQL) and machine learning.

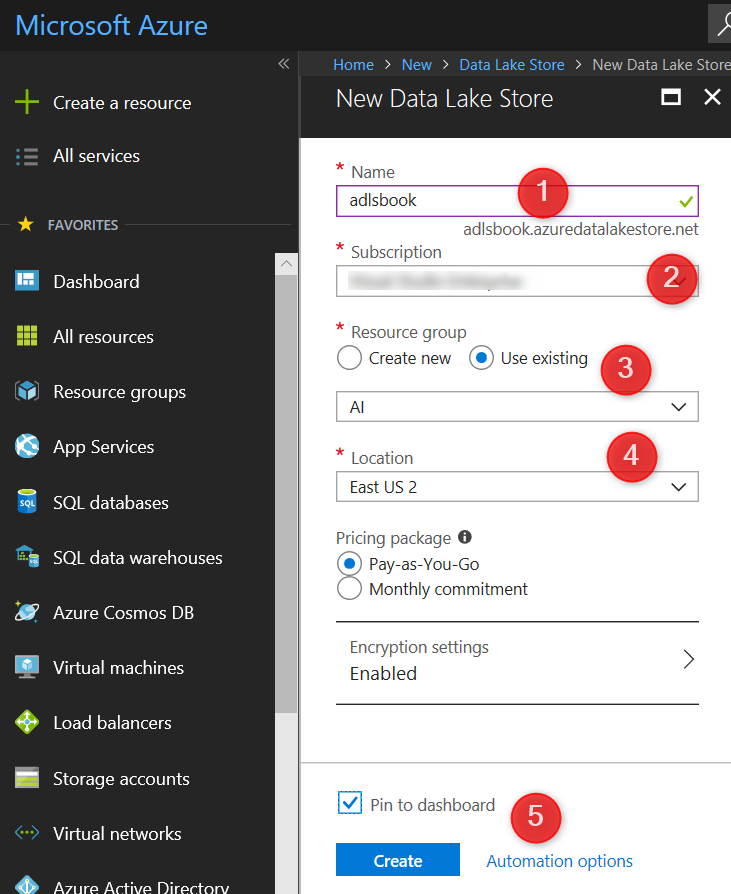

To access Azure Data Lake Store and Analytics, you need an Azure account with access to the Azure Portal. To create one, please follow the link in [2]. Sign in to "Portal.Azure.com". Then, click the Create a resource icon on the left side of the portal. When you type "data lake", two different Azure Data Lake components are shown. To start, we will first create the "Azure Data Lake Store".

After clicking the Create option, you first need to provide some information, such as the name of the service, the subscription, the resource group, and the location.
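To make the form fields concrete, here is a small, hypothetical Python sketch that collects them and checks them before you submit. It assumes Azure's naming rule for Data Lake Store accounts (3 to 24 characters, lowercase letters and digits only); the class name and sample values are illustrative and are not part of any Azure SDK.

```python
import re
from dataclasses import dataclass

# Assumed naming rule for Data Lake Store accounts: 3-24 lowercase letters or digits.
_ACCOUNT_NAME = re.compile(r"[a-z0-9]{3,24}")


@dataclass(frozen=True)
class DataLakeStoreRequest:
    """Hypothetical container for the fields the portal's Create form asks for."""

    name: str
    subscription: str
    resource_group: str
    location: str

    def problems(self):
        """Return a list of validation problems; an empty list means the request looks OK."""
        issues = []
        if not _ACCOUNT_NAME.fullmatch(self.name):
            issues.append("name must be 3-24 lowercase letters or digits")
        for field in ("subscription", "resource_group", "location"):
            if not getattr(self, field).strip():
                issues.append(f"{field} must not be empty")
        return issues


ok = DataLakeStoreRequest("adlsbook", "my-subscription", "book-rg", "East US 2")
bad = DataLakeStoreRequest("ADLS_Book", "my-subscription", "", "East US 2")
print(ok.problems())
print(bad.problems())
```

The portal performs its own validation (including the check that the name is globally unique), so this is only a way to see which inputs matter.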

Creating the component takes a while. As you can see in the above figure, the Azure Data Lake Store has been created. Once the Data Lake Store exists, we can explore it and upload new data to it.

By clicking the "Data Explorer" option, you can see the data and its folder structure. As you can see in the above figure, you can upload data, create folders, define access levels, and so forth.
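The same Data Explorer tasks (create a folder, upload a file, list a folder) can also be scripted. The following is a minimal sketch, assuming the `azure-datalake-store` Python package for Data Lake Storage Gen1 and a service principal; the tenant, client, secret, store name, folder, and file names are placeholders. The SDK is imported inside the function, so the path helpers can be used and tested without it.

```python
import os
import posixpath


def remote_folder(folder):
    """Normalise a folder such as 'raw/2019/' to an absolute store path '/raw/2019'."""
    return "/" + folder.strip("/")


def remote_path(folder, local_file):
    """Target store path for a local file, e.g. ('samples', 'data/iris.csv') -> '/samples/iris.csv'."""
    return posixpath.join(remote_folder(folder), os.path.basename(local_file))


def upload_to_store(tenant_id, client_id, client_secret, store_name, folder, local_file):
    """Create `folder` if needed, upload `local_file` into it, and return the folder listing.

    Assumes the `azure-datalake-store` package is installed; all arguments are placeholders.
    """
    from azure.datalake.store import core, lib, multithread

    # Authenticate with a service principal and open the store's file system.
    token = lib.auth(tenant_id=tenant_id, client_id=client_id, client_secret=client_secret)
    adl = core.AzureDLFileSystem(token, store_name=store_name)

    folder_path = remote_folder(folder)
    if not adl.exists(folder_path):
        adl.mkdir(folder_path)

    # Multi-threaded upload of one local file into the hierarchical folder.
    multithread.ADLUploader(
        adl,
        lpath=local_file,
        rpath=remote_path(folder, local_file),
        nthreads=4,
        overwrite=True,
    )
    return adl.ls(folder_path)
```

Nested folder names such as `raw/2019` map directly onto the store's hierarchical namespace.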

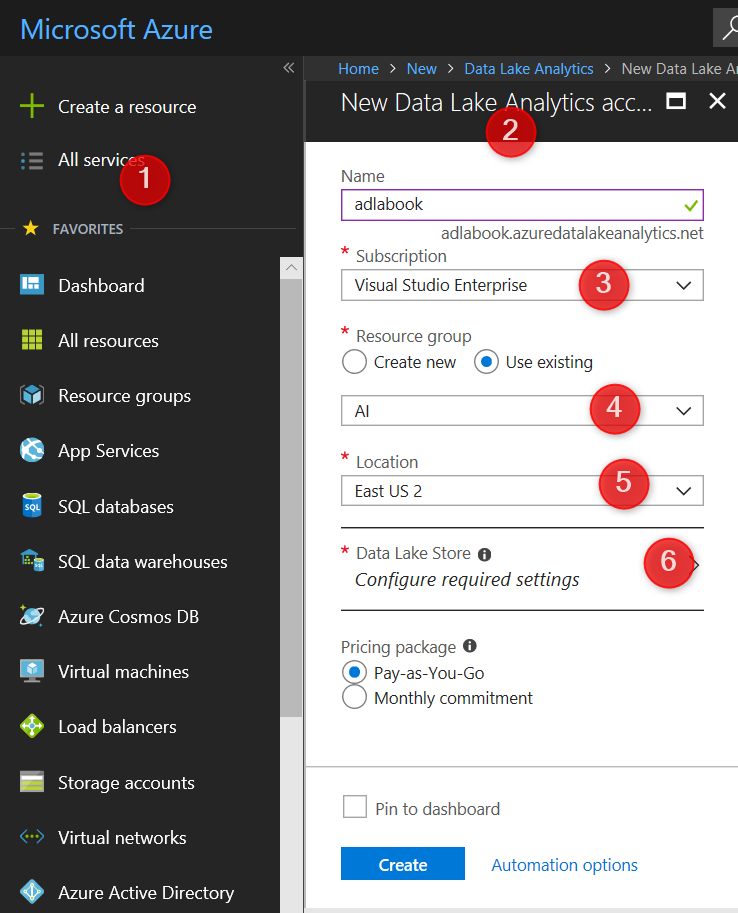

Currently, there is no data in the Azure Data Lake Store. You can import data into the Data Lake Store from other Azure components. For the machine learning scenario in this section, we will first create sample data via Azure Data Lake Analytics. To do this, we need to create a Data Lake Analytics resource. We follow the same process as for the Data Lake Store. However, we need to specify which Data Lake Store we are going to use. Please note that the order matters: always create the Data Lake Store first, and then create the Data Lake Analytics resource.

After specifying the name, location, associated Data Lake Store, subscription, and so forth, wait until the component is created and shown in the Azure dashboard. Then click the newly created Azure Data Lake Analytics resource (adlabook). Data Lake Analytics is useful for developing and running massively parallel data transformation and processing programs in U-SQL, R, Python, and .NET. To start, we will import some sample data and code. At the top of the page, click the "Sample Scripts" option.
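"Massively parallel" processing boils down to a simple pattern: split the data into pieces, process each piece independently, then combine the partial results. The sketch below shows that general pattern with Python threads on a single machine. It is an analogy only and does not reflect how Data Lake Analytics actually schedules jobs; the function names are hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor


def partition(rows, n_parts):
    """Split rows into n_parts round-robin partitions (a stand-in for chunks of a large file)."""
    return [rows[i::n_parts] for i in range(n_parts)]


def partial_sum(part):
    """Work done independently on one partition."""
    return sum(part)


def parallel_total(rows, n_parts=4):
    """Aggregate each partition concurrently, then combine the partial results."""
    with ThreadPoolExecutor(max_workers=n_parts) as pool:
        partials = list(pool.map(partial_sum, partition(rows, n_parts)))
    return sum(partials)


print(parallel_total(list(range(1, 101))))  # 5050
```

Because each partial sum depends only on its own partition, the partitions can be processed in any order or on different machines, which is what lets this kind of job scale out.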

On the Sample Scripts page, there are two main options: "Sample data missing" and "U-SQL Advanced Analytics". We need the second one to access the sample data and code. The sample data will be stored in the Data Lake Store.

To enable the U-SQL extensions and import the sample data and code, click the "U-SQL Advanced Analytics" option. About 2.5 GB will then be downloaded into the Azure Data Lake Store. Once the installation starts, you can follow the progress of enabling U-SQL and importing the data on the "Install U-SQL Extensions" page. The installation is shown as a pipeline, which may take around 2 minutes to run.

Close the page, click "adlabook", and then click "Data Explorer" at the top of the page.

You can now browse the Data Lake Store folders. Under adlsbook, there is a folder that contains two subfolders named R and Python. Click the R folder to see the mix of data files, .usql files, zip files, and so forth.
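When a folder holds a mix of file types, it can help to group a listing by extension, for example the list of paths returned by `AzureDLFileSystem.ls` in the `azure-datalake-store` package. In this sketch only the .usql file name comes from this walkthrough; the other names in the listing are hypothetical.

```python
import posixpath
from collections import defaultdict


def group_by_extension(paths):
    """Group file paths from a folder listing by lowercase extension ('(none)' if there is none)."""
    groups = defaultdict(list)
    for path in paths:
        ext = posixpath.splitext(path)[1].lower() or "(none)"
        groups[ext].append(posixpath.basename(path))
    return {ext: sorted(names) for ext, names in sorted(groups.items())}


# Hypothetical listing of the R folder; only the .usql name is taken from the text.
listing = [
    "R/ExtR_PredictUsingLinearModel_RScript.usql",
    "R/iris.csv",
    "R/my_model.zip",
    "R/README",
]
print(group_by_extension(listing))
```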

Click one of the .usql files in the R folder, such as ExtR_PredictUsingLinearModel_RScript.usql. Clicking a file opens its contents in a separate page, as shown in the above figure. As you can see in the picture below, U-SQL scripts look very similar to SQL. From this page, you can download the code, rename the file, check the file format, revoke or grant access to the file via Access control, and so forth. In the next section, I will explain the U-SQL language and how to write R or Python code inside it.
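The script name suggests it fits a linear model in R and uses it for prediction. In the U-SQL R extension, R code runs through a reducer (`Extension.R.Reducer`) over partitions of a rowset. The exact contents of the sample script are not reproduced here. The plain-Python sketch below only mimics the idea: group rows by a partition key, fit a separate ordinary least squares line per group, and emit a prediction for every row. The data and function names are hypothetical.

```python
from collections import defaultdict


def fit_line(xs, ys):
    """Ordinary least squares for y = a + b*x; returns the intercept a and slope b."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    b = sxy / sxx
    return mean_y - b * mean_x, b


def predict_per_partition(rows):
    """Fit one model per partition key and predict for each row in that partition.

    rows: iterable of (partition_key, x, y); returns a list of (partition_key, x, y, predicted_y).
    """
    groups = defaultdict(list)
    for key, x, y in rows:
        groups[key].append((x, y))
    out = []
    for key in sorted(groups):
        xs = [x for x, _ in groups[key]]
        ys = [y for _, y in groups[key]]
        a, b = fit_line(xs, ys)
        out.extend((key, x, y, a + b * x) for x, y in groups[key])
    return out


# Hypothetical data: partition 1 follows y = 1 + 2x, partition 2 follows y = 10 - x.
data = [(1, 0, 1), (1, 1, 3), (1, 2, 5), (2, 0, 10), (2, 2, 8), (2, 4, 6)]
for row in predict_per_partition(data):
    print(row)
```

Fitting per partition keeps each model independent, which is why this kind of work can be spread across many parallel workers.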

I will talk about how to explore and run the code in the next post.

Thanks,
