Time travel is now available in Fabric Warehouse, which was previously only possible in Lakehouse. This means that you can all be Dr. Strange and use your time stone to travel through time and look at a snapshot of the data at that point in time. It is an amazing feature, and it is so simple to use that you can’t believe it. I’ll explain what it is and how it can be used in this article.

Video

What is Time Travel, and why did you need it yesterday?

Data changes throughout time, especially in the world of BI and data warehousing systems; the data gets updated through ETL processes frequently. This means that the data you see in the warehouse today might differ from yesterday and the day before, and so on. Some parts of this data can be retrieved on a timely basis. You can, for example, query the sales amount from the sales table where the date has been the 2nd of April. That would give you the sales amount for the 2nd of April, even if you are querying it on the 23rd of May.

However, what if some of the sales transactions on the 2nd of April got updated? The sales amount you see would likely be the updated amount, but not the original amount. It is sometimes useful to be able to see what was that original amount, or in other words, travel in time and see what that value was.

This is helpful in many scenarios. Often, in Power BI, we work with financial data, and users would like to see how this value was at the end of last month or the month prior. The ability to time travel is immensely valuable for comparing values, troubleshooting, performance analysis, trend analysis, machine learning, and many other useful scenarios.

Fabric Delta Lake table structure

The good news is that you can now time-travel in a Fabric Warehouse (and I will explain how in the next section). However, the true magic is happening with the Delta Lake table structure. I have explained in this article and video what is Delta Lake table structure, In short, it is a way that the data stored for tables in Fabric, and that structure has the ability to capture the changes and maintain those changes. Time travel is a feature available in delta lake tables.

This means that we could already do time travel even before this feature. However, that was only limited to Lakehouse tables using Notebook and writing the code for it, which I explained here. Now, the Fabric team made that also possible for Warehouse tables when querying using the SQL analytics endpoint, which is fantastic.

That is enough of background information, let’s see how it works.

Time Travel in Action

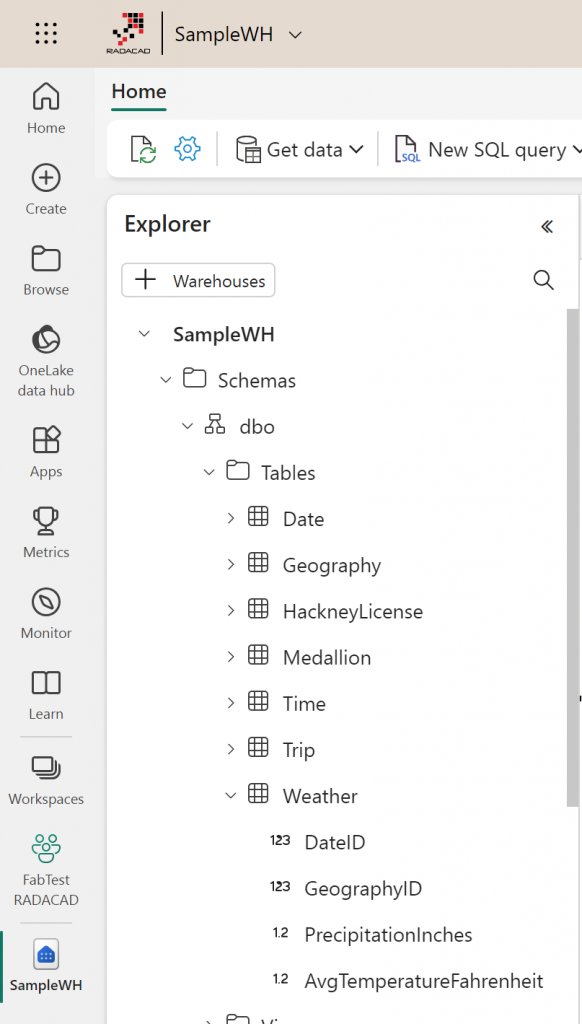

The screenshot below is an example of a Warehouse in Fabric.

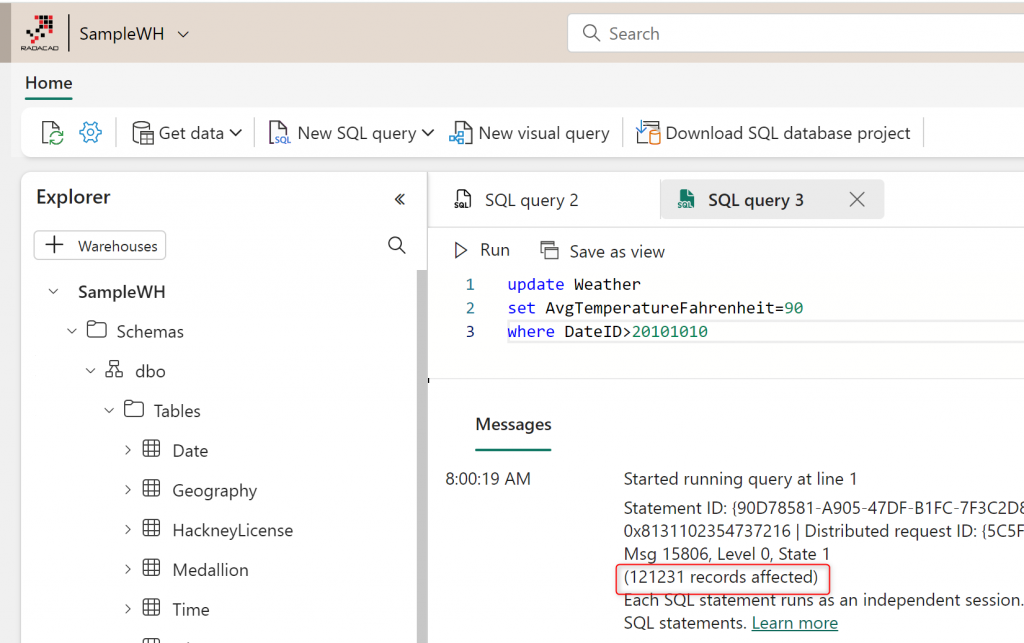

Let’s apply a change to this table using an update SQL command;

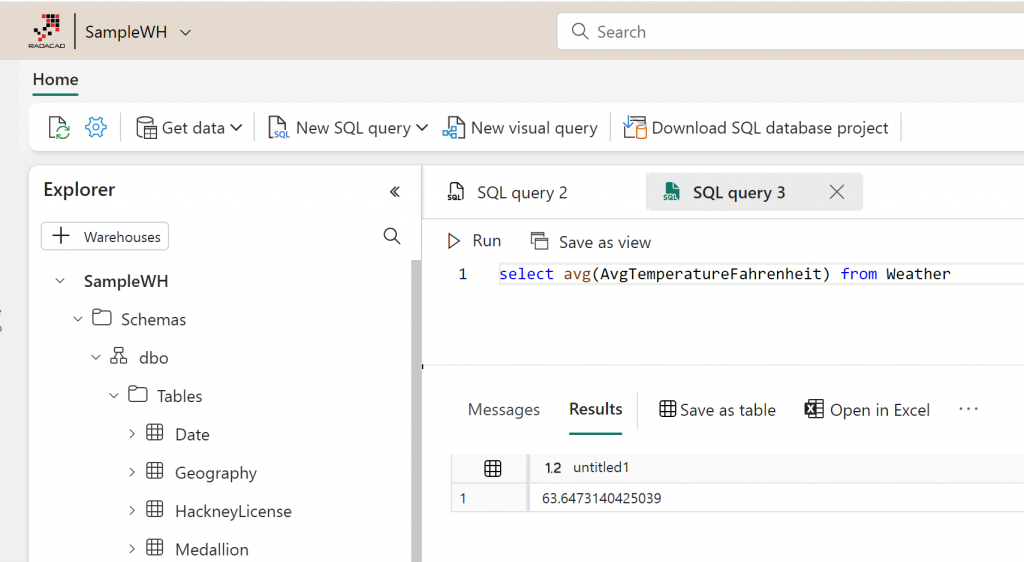

If I query anything from this table, I get the data as of now;

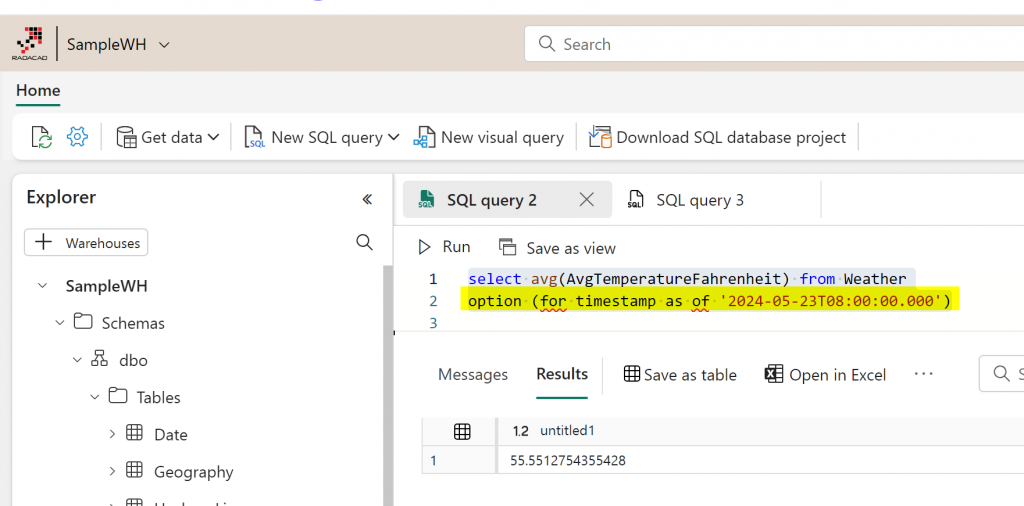

But if I want to travel in time and query it at a specific point in time, All I need to do is add an option code with the timestamp, such as the code below;

select avg(AvgTemperatureFahrenheit) from Weather

option (for timestamp as of '2024-05-23T08:00:00.000')The code above gives me the average of the AvgTemperatureFahrenheit as of 8 am on the 23rd of May. This is UTC time-based.

As you can see, the average value has changed. It was 55 at that time, but now it is 63. So, there have been changes in the value in between. These changes might have been sourced from a Data pipeline, Dataflow, or SQL command. No matter where the changes are coming from, they are captured by the warehouse tables, and we can query the data before or after the change.

This option/timestamp piece can be added at the end of all your queries, even if it joins multiple tables.

Retention Period

Time travel is possible because of the Delta Lake table structure underneath. Those tables have a retention policy for changes. The default retention period is 7 days. This means that if the data has changed, you can query those changes up until seven days ago.

Usually, the files in OneLake undergo a scheduled maintenance process, which only maintains the last seven days of changes. The VACCUM command can also be used to wipe out the changes from the past (which means time travel won’t be possible to those dates that the data has been vacuumed). I will write another article in the future about VACCUM and some other useful commands in table maintenance.

The 7 days period is the limit across the OneLake for the entire Fabric. You can’t change it as of now (you might be able to do it in the future). The main reason behind this is to keep the size of the data manageable as well as the performance. I do recommend backing up your Delta table files regularly so that in case you need to travel back in time for more than 7 days, there is a way to do that for you.

Summary

This was a short article explaining what time travel is in the Fabric warehouse, the use case scenarios, and how it can be used simply with a change in the SQL query. If you would like to learn more about Fabric objects and workloads related to this, I recommend the below articles explaining each of the topics in detail;